Pyre Agents: An Elixir-Orchestrated Runtime for Python Agents

Agentic SaaS changes the shape of software. Traditional SaaS is mostly request-response: a user acts, the system replies, and the interaction ends. In agentic SaaS, work persists across time. Software begins to monitor, decide, retry, coordinate tools, and escalate on the user’s behalf. As argued in “The Future of SaaS Is Agentic,” the interface does not disappear, but it stops being the place where all work happens and becomes the place where intent is set, progress is reviewed, and exceptions are handled.

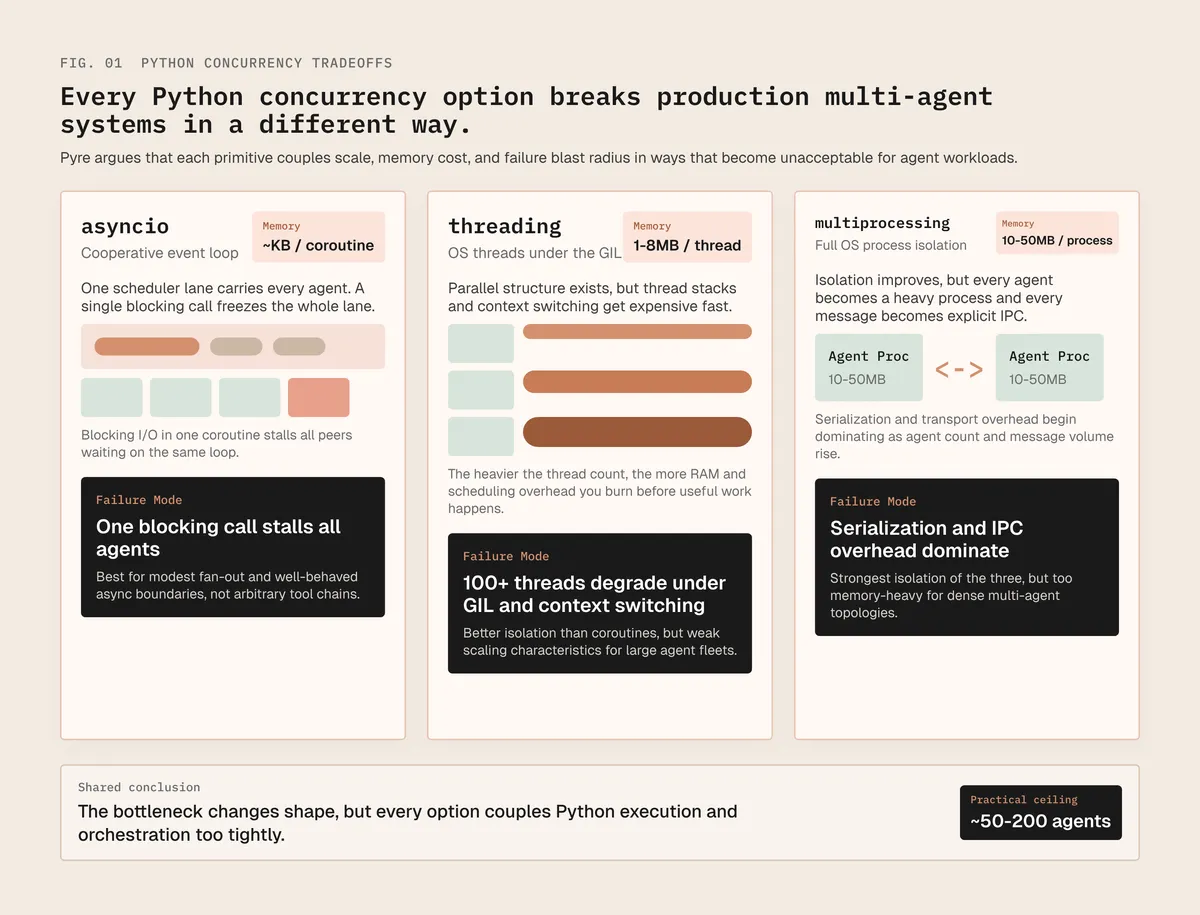

That shift creates a runtime problem. Once software becomes a host for ongoing execution, the question is not only whether an agent can reason well, but also whether the system can run many concurrent, stateful processes reliably and economically. Python is the natural place to build agent logic because of its AI tooling and developer familiarity, but its common concurrency patterns are a poor fit for large-scale orchestration. asyncio is lightweight but depends on cooperative scheduling, so one blocking call can stall the event loop. Threads typically cost about 1–8 MB each, mostly as reserved stack space. Multiprocessing is heavier still, because full OS processes bring higher memory, serialization, and IPC overhead.
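The cooperative-scheduling caveat is easy to reproduce. The sketch below is illustrative and not taken from Pyre: a hypothetical `heartbeat` coroutine ticks every 10 ms while a second coroutine makes one synchronous `time.sleep` call, and the largest gap between ticks shows how long the whole event loop was stalled.

```python
import asyncio
import time


async def heartbeat(gaps: list[float], interval: float = 0.01) -> None:
    """Record the wall-clock gap between consecutive ticks."""
    last = time.perf_counter()
    while True:
        await asyncio.sleep(interval)
        now = time.perf_counter()
        gaps.append(now - last)
        last = now


async def blocking_agent(block_for: float) -> None:
    """A badly behaved agent: time.sleep blocks the entire event loop."""
    await asyncio.sleep(0.03)  # let the heartbeat tick a few times first
    time.sleep(block_for)      # synchronous call; no other coroutine can run


async def measure_max_gap(block_for: float = 0.2) -> float:
    """Run both coroutines on one loop and return the worst heartbeat gap."""
    gaps: list[float] = []
    ticker = asyncio.create_task(heartbeat(gaps))
    await blocking_agent(block_for)
    await asyncio.sleep(0.03)  # give the heartbeat a chance to resume
    ticker.cancel()
    try:
        await ticker
    except asyncio.CancelledError:
        pass
    return max(gaps)


if __name__ == "__main__":
    worst = asyncio.run(measure_max_gap())
    print(f"largest heartbeat gap: {worst * 1000:.0f} ms")
```

The heartbeat asks for 10 ms intervals, yet its worst gap is at least as long as the blocking call, because nothing on the loop can run until that call returns.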

Why conventional Python orchestration starts to bend

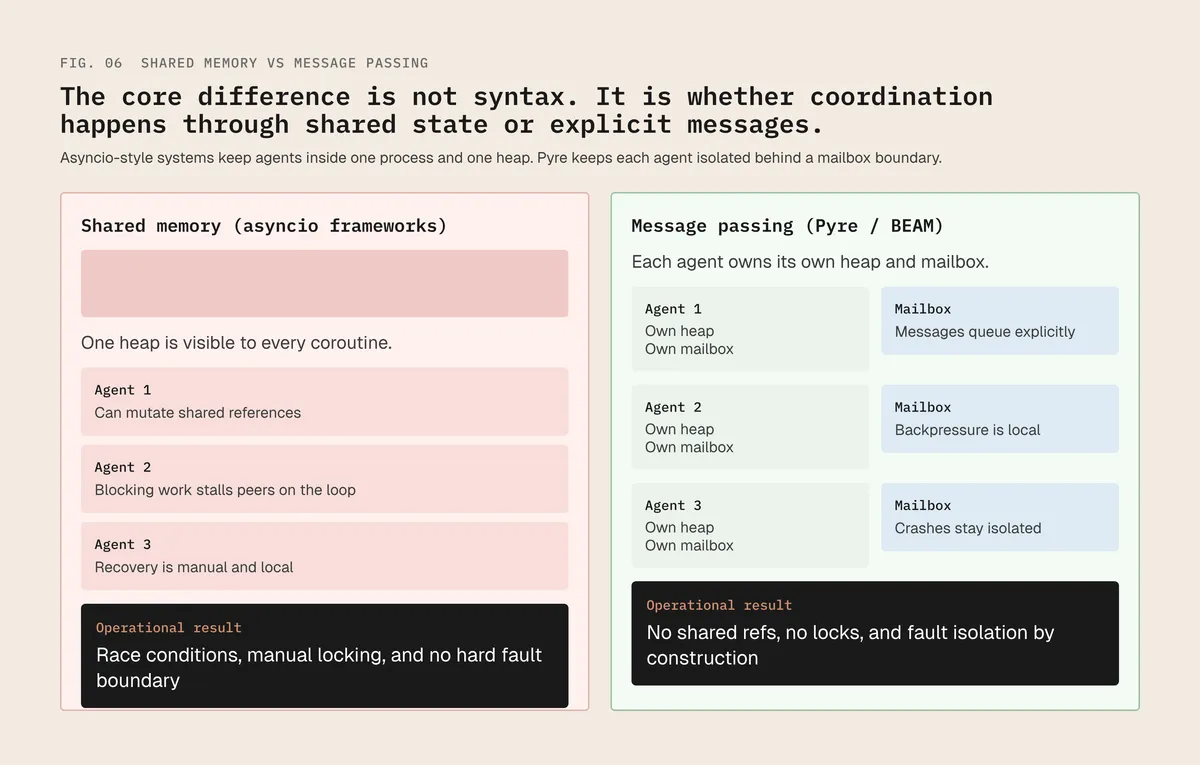

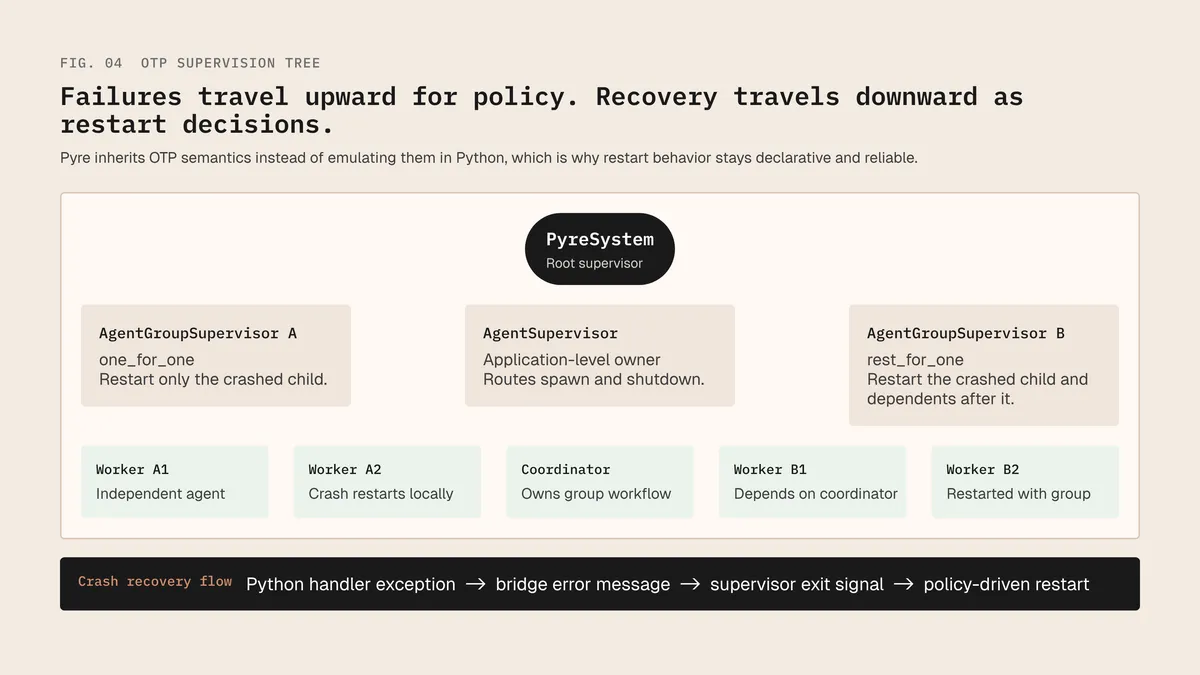

The deeper issue is that agents increasingly look more like processes than requests. Once they hold state, fail independently, recover, exchange messages, and continue work over time, they need isolation, supervision, and clear failure boundaries. That is the core idea behind Pyre. Instead of forcing Python to do everything, Pyre splits the system across two runtimes: Python remains the execution layer for agent logic, while Elixir and OTP handle orchestration, supervision, routing, and recovery. Pyre uses the actual BEAM for orchestration and actual CPython for execution, connected through a local IPC bridge.
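The source does not describe the bridge's wire format, so the following is only a sketch under stated assumptions: messages are JSON objects framed with a 4-byte big-endian length prefix (the framing Erlang ports provide through the `{:packet, 4}` option), and the Python side dispatches on a hypothetical `agent` field. The function names, message fields, and handler registry are all illustrative and are not Pyre's API.

```python
import io
import json
import struct
from typing import BinaryIO, Callable, Dict, Optional

# Hypothetical registry type: agent name -> function holding that agent's logic.
Handler = Callable[[dict], dict]


def write_frame(stream: BinaryIO, message: dict) -> None:
    """Write one message as <4-byte big-endian length><JSON payload>."""
    payload = json.dumps(message).encode("utf-8")
    stream.write(struct.pack(">I", len(payload)) + payload)
    stream.flush()


def read_frame(stream: BinaryIO) -> Optional[dict]:
    """Read one framed message; return None on a clean end of stream."""
    header = stream.read(4)
    if len(header) < 4:
        return None
    (length,) = struct.unpack(">I", header)
    payload = stream.read(length)
    if len(payload) < length:
        raise EOFError("stream closed mid-frame")
    return json.loads(payload.decode("utf-8"))


def serve(inp: BinaryIO, out: BinaryIO, handlers: Dict[str, Handler]) -> None:
    """Execution-layer loop: run agent logic and report failures upstream."""
    while (request := read_frame(inp)) is not None:
        try:
            result = handlers[request["agent"]](request["payload"])
            reply = {"id": request.get("id"), "ok": True, "result": result}
        except Exception as exc:  # report the failure; do not decide recovery here
            reply = {"id": request.get("id"), "ok": False, "error": repr(exc)}
        write_frame(out, reply)


if __name__ == "__main__":
    # In-memory streams stand in for the IPC channel.
    inp, out = io.BytesIO(), io.BytesIO()
    write_frame(inp, {"id": 1, "agent": "echo", "payload": {"text": "hi"}})
    inp.seek(0)
    serve(inp, out, {"echo": lambda p: {"echo": p["text"]}})
    out.seek(0)
    print(read_frame(out))
```

In a design like this, the Python loop only executes handlers and reports errors. Whether a failed agent is restarted is left to the orchestration side, which matches the division of labor described above.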

This changes both the economics and the reliability profile of agentic systems. Pyre’s validation results report about 3.77 KB of incremental memory per additional agent, 42,940 messages per second per bridge connection, a median latency of 0.11 ms (0.20 ms at p99) for cross-runtime calls, a 611 ms cold start, and 100% restart success across the tested supervision strategies. In the validation environment (M1 Mac Pro), 10,000 agents would require about 170 MB in total. These figures support the larger point: a Python-first developer experience can sit on top of a much lighter and more fault-tolerant orchestration substrate than conventional thread- or process-heavy Python designs.
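The relationship between the per-agent figure and the 10,000-agent total can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above. Splitting the total into a fixed baseline plus a per-agent increment is an assumption about how the total is composed, not a breakdown reported by the source, and the calculation treats 1 MB as 1,000 KB.

```python
PER_AGENT_KB = 3.77        # reported incremental memory per additional agent
AGENTS = 10_000
REPORTED_TOTAL_MB = 170.0  # reported total for 10,000 agents


def incremental_mb(agents: int, per_agent_kb: float = PER_AGENT_KB) -> float:
    """Memory attributable to the agents alone, in MB (1 MB = 1,000 KB)."""
    return agents * per_agent_kb / 1000


def implied_baseline_mb(
    total_mb: float = REPORTED_TOTAL_MB, agents: int = AGENTS
) -> float:
    """Assumed fixed overhead: reported total minus the per-agent share."""
    return total_mb - incremental_mb(agents)


if __name__ == "__main__":
    print(f"agent share:      {incremental_mb(AGENTS):.1f} MB")
    print(f"implied baseline: {implied_baseline_mb():.1f} MB")
```

Under that assumption, the agents themselves account for roughly 37.7 MB of the 170 MB, and the remaining 132 MB or so would be fixed cost that does not grow with the number of agents.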

What Pyre changes

| Metric | Whitepaper result | Why it matters |
|---|---|---|
| Incremental memory per agent | 3.77 KB | Makes high-density agent systems economical |
| Throughput per bridge connection | 42,940 msg/s | The bridge is unlikely to be the bottleneck for LLM workloads |
| Median / p99 bridge latency | 0.11 ms / 0.20 ms | Cross-runtime calls are negligible relative to LLM latency |
| Cold start | 611 ms | Acceptable for long-running services |
| Recovery success | 100% in tested scenarios | Supervision is part of the runtime, not app boilerplate |
| 10,000-agent projection | ≈170 MB total | Large concurrent fleets become practical on ordinary machines |

That is why Pyre matters in the context of agentic SaaS. The argument is not simply that agents can be packed into less memory, nor that every AI product needs a new runtime. It is that when software becomes a system of long-lived, concurrent, failure-prone processes acting on behalf of users, runtime design becomes part of product design. The moat in agentic SaaS is not just the model or the interface, but the trusted environment in which work is executed. Pyre is an architectural bet on that future: Python for agent logic, Elixir for orchestration, and BEAM-grade supervision underneath.